
Providers of fully managed ELT regularly pressure-test their software against a variety of use cases.

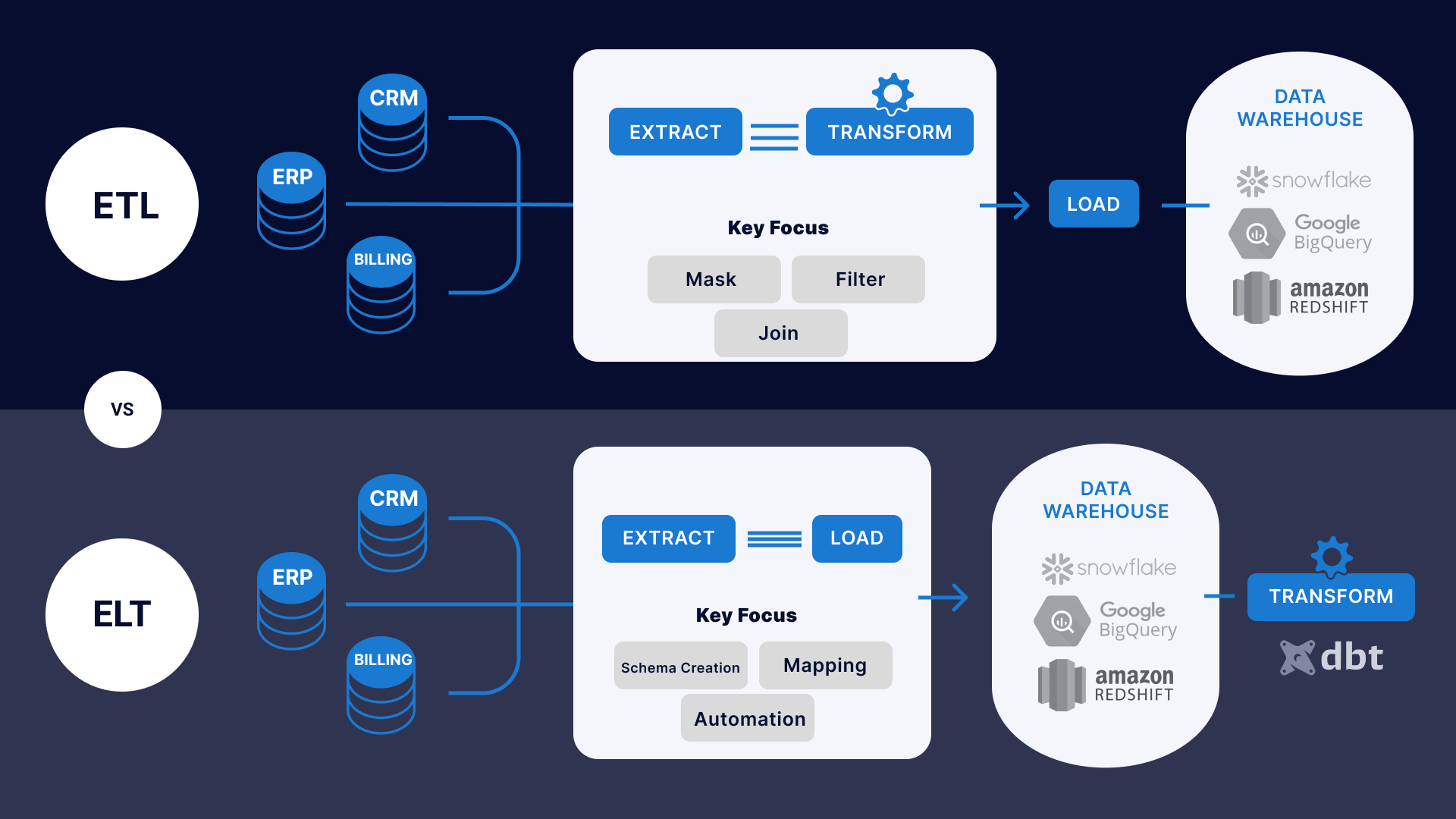

Providers of purpose-built, fully managed ELT have done comprehensive research to understand every idiosyncrasy of the data sources they connect to.

ELT separates the loading and transformation processes, and data is immediately loaded to a cloud-based data warehouse or data lake. So analysts get faster access to the information they need. Unlike in ETL, they don't have to wait for transformation to be completed before they can explore the data. This is a significant advantage when large amounts of data are generated in a short time, such as streaming or stock market data.

ELT is ideal when analysts and end users need to manipulate data and gain insights in near real-time, by utilizing change data capture. In these scenarios, delays such as those caused by the ETL method, where data is transformed before being sent to storage, have big consequences.
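As a rough illustration of the change data capture idea mentioned above, the sketch below applies a stream of change events to a destination table as they arrive, instead of reloading the whole source. The event format (`op`, `symbol`, `price`), the `quotes` table and the `apply_changes` helper are assumptions made for this example, and an in-memory SQLite database stands in for a cloud data warehouse.

```python
import sqlite3

# In-memory SQLite stands in for the destination warehouse (assumption).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE quotes (symbol TEXT PRIMARY KEY, price REAL)")


def apply_changes(changes):
    """Apply insert, update and delete events to the destination table."""
    for change in changes:
        if change["op"] == "delete":
            conn.execute("DELETE FROM quotes WHERE symbol = ?", (change["symbol"],))
        else:
            # Inserts and updates are both handled as an upsert on the key.
            conn.execute(
                "INSERT OR REPLACE INTO quotes (symbol, price) VALUES (?, ?)",
                (change["symbol"], change["price"]),
            )
    conn.commit()


# Hypothetical change events captured from a source system.
apply_changes([
    {"op": "insert", "symbol": "AAA", "price": 10.0},
    {"op": "insert", "symbol": "BBB", "price": 20.0},
    {"op": "update", "symbol": "AAA", "price": 10.5},
    {"op": "delete", "symbol": "BBB"},
])

quotes = conn.execute("SELECT symbol, price FROM quotes").fetchall()
```

Only the rows that changed are touched, which is why this style of loading suits data that arrives quickly and continuously.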

Despite ETL's success, the critical weakness of traditional ETL tools, such as Pentaho, Informatica and SSIS, is that data is transformed before it is loaded. These transformation operations can be complicated and usually obscure or alter the raw values from the source. This means that engineers must rebuild the entire pipeline whenever the source changes or analysts need a new data model. Consequently, stoppages and extra engineering resources are required whenever your data source adds a new field or your company needs a new metric.

Companies must add data sources, collect a larger volume of data and track new metrics to propel business growth. But the brittleness of ETL turns the opportunities offered by new data into a heavy technical burden.

By contrast, the ELT process extracts and loads raw data with no assumptions about how the data will be used. ELT is a product of the modern cloud and the plummeting storage, computation and bandwidth costs of recent decades. Without constraints on these critical data integration elements, there is no reason to follow an integration architecture that focuses heavily on preserving those resources.
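To make the point about new fields and new metrics concrete, here is a minimal sketch in which raw records are loaded untouched and models are derived afterwards. The `raw_events` table, the record fields (`user`, `amount`, `channel`) and the helper functions are hypothetical names chosen for this example, and an in-memory SQLite database stands in for the destination.

```python
import json
import sqlite3

# In-memory SQLite stands in for the destination (assumption).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE raw_events (payload TEXT)")


def load_raw(records):
    """Load records untouched, so a new source field needs no pipeline change."""
    conn.executemany(
        "INSERT INTO raw_events (payload) VALUES (?)",
        [(json.dumps(record),) for record in records],
    )


def revenue_by_user():
    """An existing model; it reads only the fields it needs."""
    totals = {}
    for (payload,) in conn.execute("SELECT payload FROM raw_events"):
        event = json.loads(payload)
        totals[event["user"]] = totals.get(event["user"], 0) + event["amount"]
    return totals


def revenue_by_channel():
    """A model added later; it reuses raw data that is already loaded."""
    totals = {}
    for (payload,) in conn.execute("SELECT payload FROM raw_events"):
        event = json.loads(payload)
        channel = event.get("channel", "unknown")
        totals[channel] = totals.get(channel, 0) + event["amount"]
    return totals


load_raw([{"user": "a", "amount": 10}])
# The source later starts sending a "channel" field; loading is unchanged.
load_raw([
    {"user": "a", "amount": 5, "channel": "web"},
    {"user": "b", "amount": 7, "channel": "app"},
])
```

Because the raw values are kept, the new `revenue_by_channel` model can be added without re-extracting anything, and the older `revenue_by_user` model keeps working after the source changes.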

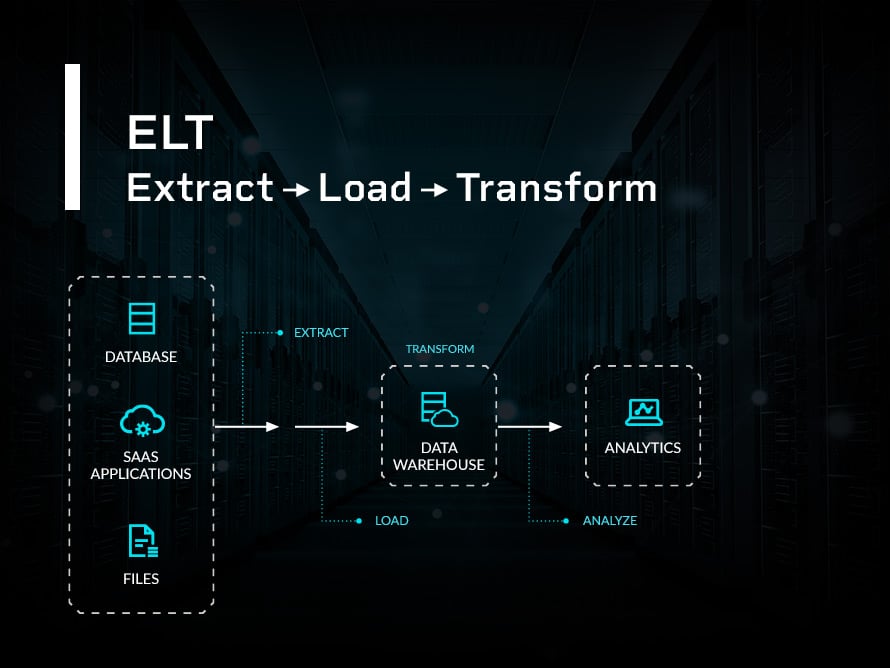

Since storage and bandwidth were finite and restricted in the 1970s, ETL focuses on minimizing the usage of these resources. These restrictions no longer apply, and ELT can help organizations collect, refine and accurately analyze large-scale data with much less menial effort.

The ELT process has three steps:

1. Extract: Raw data is copied or exported from sources like applications, web pages, spreadsheets, SQL or NoSQL databases and other data sources. This data can be structured or unstructured. Once exported, the data is moved to a staging area.
2. Load: The exported data is transferred from the staging area to centralized storage like a data lake or cloud data warehouse. Since ELT loads data before transformation and is therefore faster than ETL, companies can use it for fast processing during work or peak customer hours.
3. Transform: The data in storage is then transformed using a schema. Analysts use transformations to decipher raw data and gain valuable insights. This process includes all operations that alter or create values, such as cleaning, calculating, translating, authenticating, encrypting or formatting data.
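The extract, load and transform steps can be sketched end to end as follows. This is only an illustration under stated assumptions: the source records, the `raw_orders` table and the `orders` view are invented for the example, and an in-memory SQLite database plays the role of the cloud data warehouse.

```python
import sqlite3

# Hypothetical source records; a real pipeline would read from an application or database.
SOURCE_ROWS = [
    {"order_id": "1", "amount": "19.90", "currency": "usd"},
    {"order_id": "2", "amount": "5.00", "currency": "eur"},
]


def extract():
    """Extract: copy the raw records from the source without changing them."""
    return [(r["order_id"], r["amount"], r["currency"]) for r in SOURCE_ROWS]


def load(conn, rows):
    """Load: store the raw values as text in the destination."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS raw_orders (order_id TEXT, amount TEXT, currency TEXT)"
    )
    conn.executemany("INSERT INTO raw_orders VALUES (?, ?, ?)", rows)


def transform(conn):
    """Transform: build a typed, cleaned view inside the destination."""
    conn.execute(
        """
        CREATE VIEW IF NOT EXISTS orders AS
        SELECT
            CAST(order_id AS INTEGER) AS order_id,
            CAST(amount AS REAL) AS amount,
            UPPER(currency) AS currency
        FROM raw_orders
        """
    )


conn = sqlite3.connect(":memory:")
load(conn, extract())
transform(conn)
orders = conn.execute(
    "SELECT order_id, amount, currency FROM orders ORDER BY order_id"
).fetchall()
```

The raw table stays available next to the transformed view, so a different transformation can be written later against the same loaded data.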

Extract, load, transform (ELT) is a data integration process that moves data directly from source to destination for analysts to transform as needed. ELT streamlines data pipeline creation and maintenance. Moreover, ELT pipelines can be built and managed using cloud-based software like Fivetran.

ELT is a newer data integration method than ETL (extract, transform, load). The latter was invented in the 1970s and catered to the technical limitations of that time.
